HEC Montréal: Deployment of a Large-Scale Mail Installation

Over the past few years, e-mail has grown into one of the most important communication mediums. Naturally, e-mail infrastructures must be fast, secure and reliable. Ideally, they also should be able to integrate easily and effectively with anti-unsolicited bulk e-mail (UBE) solutions.

HEC Montréal is Canada's first management school, founded in 1907. More than 11,000 students and 220 professors use HEC's e-mail system every year, and alumni keep their e-mail accounts after graduation. Unfortunately, the proprietary e-mail system did not evolve and as the load started to increase, the infrastructure could no longer keep up with requirements.

The previous mail infrastructure at HEC Montréal was based on four IBM AIX servers running Netscape Messaging Server 4.15. Each of those servers offered all services (IMAP, POP3, SMTP and Webmail access) for a subset of users. The system simply did not scale to current mail requirements. According to Eddy Béliveau, Senior Network Analyst at HEC Montréal:

We found ourselves with mail server software that had not been upgraded in the last two years because the AIX platform was no longer supported by Sun/iPlanet/Netscape, which owned the mail server software. We had a regular increase of our e-mail traffic during the last 12 months due to the presence of UBE and viruses trying to replicate themselves. We got peaks of over 100 concurrent SMTP connections, which was too much for our servers; the typical load average was over 50 on all servers. We could not, on our old 133MHz servers, execute any anti-virus or anti-UBE applications, not even a simple RBL filtering policy. Thus, we had to re-examine the hardware and software architecture of our e-mail system but [could] not find time to install alternatives. We were like a dog running after his tail trying to stabilize the situation.

HEC Montréal contacted us at Inverse, Inc., to help them replace the mail infrastructure and deploy a better alternative.

The proposed solution was driven by the following factors:

Cost: HEC Montréal could not afford a per-user license fee for 35,500 users.

Ease of maintenance: the infrastructure had to be easy to manage. Accounts creation and destruction should be automated, updates should be easy to apply and the infrastructure should let HEC Montréal leverage the expertise they have.

Security: the components of the solution should have a proven security track record.

Robustness: the components should be mature and should have been used in production environments for months. Furthermore, the development should be active to accelerate bug fixes, feature enhancements and security updates.

Scalability: the solution must meet its purpose for many months, because the number of users grows by 2,000–3,000 every year. Its architecture also should allow adding extra servers to distribute the load or offer more redundancy.

When we were first approached, HEC Montréal was leaning toward a Linux-based solution running Novell NetMail 3.1. Having great experience with free alternatives, we decided to compare the solution we had in mind with Novell's offerings.

That said, we built two identical test environments using Red Hat Linux 9 and installed NetMail 3.1 on one and our proposed solution on the other. Next, we performed a series of stress tests in order to measure the stability and the performance of the two solutions. The tests were performed with two benchmarking utilities, postal and tm. The results showed that while NetMail was the fastest for POP3 operations, it proved to be the slowest in the IMAP and SMTP tests. It also had a lot of stability issues when overloading the server with IMAP requests.

Combined with our experience, we proposed a solution based almost entirely on open-source components. We started with a standard Red Hat Linux 9 distribution using Silicon Graphics, Inc.'s XFS kernel packages. We included Cyrus IMAP and Cyrus SASL, which included IMAP, LMTP and POP3 dæmons as well as authentication libraries and redirection/vacation scripts support using Sieve. Next, Postfix, AMaViS, SpamAssassin, Vipul's Razor and NAI VirusScan were added to build a complete SMTP server solution with enhanced tools to limit the delivery of UBE and viruses. Apache, PHP4, IMAP Proxy and SquirrelMail provided a complete Webmail solution. OpenLDAP was added to store all information regarding users' accounts (e-mail address and aliases, SquirrelMail preferences and so on), as well as other specific attributes of HEC Montréal. Finally, we installed Linux HA Heartbeat, software used to monitor the health of some nodes on the network.

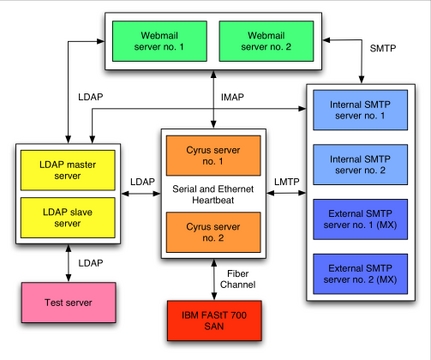

The new infrastructure is running on 11 IBM eServer xSeries x305 and x335 servers. The two x335s are connected to an IBM FAST 700 Storage Array Network (SAN) using Fibre Channel, where the mailstore resides. The XFS filesystem is used for the mailstore in order to maximize file access operations. Figure 2 depicts the architecture.

Four STMP servers running Postfix are used: two of them are mail exchangers (MXes) for the HEC Montréal domains and the other two serve internal mailing needs. These servers also use AMaViS, SpamAssassin, Vipul's Razor and Network Associates' VirusScan to limit the delivery of UBE and viruses. Furthermore, two Cyrus IMAP servers are connected using serial and Ethernet cables for high availability. Only one Cyrus IMAP server is active at any moment; it serves all POP3 and IMAP connections, stores mails on the SAN (received using the LMTP protocol from the four Postfix servers) and processes Sieve scripts.

Two Webmail servers run Apache, PHP4, SquirrelMail and IMAP Proxy. The latter is used to cache IMAP connections between SquirrelMail and the Cyrus IMAP server in order to minimize the load (authentication and process forks) on the mailstore. Finally, one other server is used only for testing purposes. That is, any modifications to the infrastructure must go through this server, which is configured to run every component, before being applied to the environment in production.

With regard to the UBE filtering, we check mail at many levels to ensure we block as many as we can. Our checks include carefully chosen real-time blackhole lists (RBLs); header and MIME header checks using up-to-date maps from SecuritySage, Inc.; and content filtering initiated from AMaViS using SpamAssassin, Vipul's Razor for UBEs analysis and VirusScan for viruses.

This solution has proven to be greatly effective and produces few false positives. The system also was built with load balancing and failover in mind. The SMTP and the Webmail servers are used in a round-robin fashion, efficiently distributing the load among all of them.

The main Cyrus server has an identical backup server in case of failure. The latter is connected to the main Cyrus server and uses Heartbeat to monitor the availability of the server. In case of a failure (hardware problem, operating system crash and so on), the secondary Cyrus server takes over all services. Heartbeat automatically mounts the mailstore (located on the SAN), activates the network alias and starts all Cyrus services. This offers a warm switch-over that minimizes the outage time; sometimes it's not even noticeable.

Finally, the LDAP system offers a master node together with a slave that replicates the former using slurpd. All services are configured to failover automatically to the slave in case of a failure on the master node. Some services also are configured to use the slave as the master node in order to distribute the LDAP load among both servers; they failover to the master node.

After putting the 11 servers for the new infrastructure in place, one of the remaining challenges was to migrate all users from the old infrastructure to the new one. About 35,500 users, 82,500 mailboxes and hundreds of thousands of messages (35GB of mail) had to be migrated. Furthermore, redirection scripts and vacation messages also had to be converted, and information such as preferences from the previous Webmail system had to be kept intact. In order to do this, we created a set of Perl scripts to take care of the entire migration in a way that would appear seamless for the users:

LDAP Init: populates the new LDAP server (based on OpenLDAP) using the values from the previous LDAP server (based on Netscape iPlanet). Included attributes are e-mail addresses and aliases, special folders and signature preferences for Webmail.

Create Users: creates all user accounts about to be migrated.

Load Sieve: creates Sieve scripts and uploads them to the mailstore by reading attributes from the previous LDAP server. Sieve scripts are used for automatic redirections and vacation messages.

Copy Mailboxes: copies all mailboxes for the users being migrated. All message flags are kept intact. The IMAP protocol is used a lot in this script. This script also updates the mailHost attribute on both LDAP servers so the mails are routed to the correct destination mailboxes.

Update Mailboxes: run the morning after the migration to move the remaining (if any) messages in the users' mailboxes. Mail could have been stuck in the queue of the SMTP servers, before the users' mailHost attributes were changed.

To minimize service interruptions for the users, we ran the scripts in the order listed once classes were finished at the end of the day. Few messages were rejected during the import process; those that were simply were retried by the source SMTP servers. In total, four nights were required to migrate all the information. Running the scripts took from four to seven hours, depending on the number of users located on each source server and the execution speed, which was mainly limited by the performance of the old AIX servers.

After the migration, we extensively monitored all services in order to discover any problems. As expected, we didn't have many. We mainly tuned the minimum preforks of Cyrus processes as well as their respective maximum children. We also tuned the SMTP servers for the default process limits and preforks for AMaViS. We also used temporary LDAP queries during the migration, so we had to replace them with optimized ones once the migration finished.

During a typical day, HEC Montréal receives over 125,000 e-mails, and 60% to 80% of the traffic is composed of UBEs. The internal SMTP servers also manage thousands of messages sent by users, distribution lists or other systems. About 300,000 POP3 connections (from 5,500 different users) and 60,000 IMAP connections (from 5,000 different users) are initiated every day on the main Cyrus server. Peaks of 225 concurrent IMAP connections and 50 concurrent POP3 connections frequently are encountered.

As mentioned earlier, the anti-UBE policies in place have proven to be effective. During the first week after the migration, the two mail exchangers blocked more than 600,000 unsolicited bulk e-mails. The week after, spammers were less aggressive and the systems blocked over a quarter of a million messages. The most effective policy is the RBL checks, followed by the content filtering checks (using SpamAssassin and Vipul's Razor) and, finally, the header and MIME header checks.

To extract those statistics, we installed Spamity, which parses mail logs from the four Postfix servers and updates a PostgreSQL database running on the test server. Thereafter, users or administrators can examine the mail that was blocked by anti-UBE policies by using a simple Web browser. Users also can perform searches for specific e-mail addresses or domain names and filter the results by anti-UBE policies.

As you have seen in this article, migrating from a proprietary solution to an open-source solution was a challenge. According to Emmanuel Vigne, Information Systems Director at HEC Montréal:

The key business benefits are huge, as we nearly eliminated UBE and greatly enhanced the architecture of our mail infrastructure. We moved from an architecture where all services were offered by four servers to an architecture where the services are offered by many servers. That allows us to minimize any potential outage and scale as the number of users grow. In case of a failure, only one specific service is affected, contrary to the situation before where thousands of users could no longer use the e-mail service in case of a single server failure.

Putting this new infrastructure in place allowed us to contribute to the Open Source community by developing a set of patches to correct bugs and/or add features to most components we installed.

As with any other system, this one will evolve over time. Interesting anti-UBE technologies are emerging, such as Sender Policy Framework (SPF) [see page 50] and Spamhaus Exploits Block List (XBL), and a new stable version of Cyrus is available with NNTP and mailbox annotations support. In addition, Postfix 2.1 is coming along nicely and should offer excellent connection/rate control with its new anvil server.

Finally, as this article was being written, a mirroring solution was being deployed for the SAN. This should offer storage redundancy and eliminate the single potential point of failure in the current infrastructure.

Resources for this article: /article/7456.

Ludovic Marcotte (ludovic@inverse.ca) holds a Bachelor's degree in Computer Science from the University of Montréal. He currently is a software architect for Inverse, Inc., an IT consulting company located in downtown Montréal.